PHP is great at commerce. It’s terrible at heavy async work. Stop pretending otherwise.

If your ERP exports run on Magento cron, your pricing sync blocks checkout performance, and your shipment tracking retries live inside a plugin — you didn’t build a commerce platform. You built a monolith that happens to sell things.

I’ve seen this in project after project: teams push processing work into Magento because it’s there, it’s familiar, and nobody wants to introduce another language. The result is always the same — a system that gets slower, harder to debug, and more fragile with every integration you add.

There’s a better way. And it starts with drawing a line.

Why This Keeps Happening

Magento is a full-stack framework. It has cron, queues (via RabbitMQ or MySQL), observers, plugins, and a CLI. So when a new integration requirement comes in — sync prices from SAP, push orders to an ERP, track shipments from a carrier API — the path of least resistance is obvious: write a module, schedule a cron, call it done.

Here’s why teams keep making this choice:

- Familiarity. The team knows PHP and Magento’s DI container. Introducing Go means hiring or upskilling.

- Agency incentives. Agencies bill Magento hours. A Go microservice is out of scope, harder to estimate, and doesn’t fit the SOW.

- Platform defaults. Magento ships with cron_schedule, queue_topology.xml, and MessageQueueConsumerInterface. It looks like it was designed for this. It wasn’t.

- Short-term thinking. The first integration works fine in cron. The fifth one doesn’t. But by then, the architecture is set.

The problem isn’t that Magento can’t do async work. It can — technically. The problem is that it does it badly at scale, and every integration you add compounds the cost.

What Actually Goes Wrong

Cron Contention

Magento’s cron runner executes the jobs in each cron group one after another inside a PHP process. When you stack 15 custom cron jobs on top of indexers, cache warming, and queue consumers, you get overlap, missed runs, and unpredictable execution order. I’ve debugged production systems where pricing sync didn’t run for 6 hours because a catalog export job was stuck.

Memory and Process Limits

PHP processes are request-scoped by design. Long-running workers fight against PHP’s memory model. You end up writing gc_collect_cycles() calls and restarting consumers every N messages to avoid memory leaks. That’s not engineering — that’s life support.

No Native Concurrency

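Need to sync 50,000 prices from an ERP? In Magento, you’re either looping sequentially or managing brittle pcntl_fork hacks. In Go, you spin up a worker pool with goroutines and channels in about 30 lines of code. For I/O-bound work like API calls, throughput grows roughly with the number of concurrent workers until the target API’s rate limits become the bottleneck. The difference isn’t marginal.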
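Here is a minimal sketch of that kind of worker pool. The `PriceUpdate` and `Result` types and the `syncPrice` function are hypothetical stand-ins. In a real service `syncPrice` would make the ERP or Magento API call; here it only rejects negative prices, so the example runs on its own.

```go
package main

import (
	"fmt"
	"sync"
)

// PriceUpdate is a hypothetical record pulled from the ERP.
type PriceUpdate struct {
	SKU   string
	Price float64
}

// Result reports the outcome of syncing one price.
type Result struct {
	SKU string
	Err error
}

// syncPrice is a placeholder for the real API call; it only validates the price.
func syncPrice(u PriceUpdate) error {
	if u.Price < 0 {
		return fmt.Errorf("invalid price %.2f for %s", u.Price, u.SKU)
	}
	return nil
}

// runPool fans updates out to `workers` goroutines and collects every result.
func runPool(updates []PriceUpdate, workers int) []Result {
	jobs := make(chan PriceUpdate)
	results := make(chan Result)

	var wg sync.WaitGroup
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for u := range jobs { // each worker pulls jobs until the channel closes
				results <- Result{SKU: u.SKU, Err: syncPrice(u)}
			}
		}()
	}

	// Feed the jobs, then close the channel so the workers' range loops end.
	go func() {
		for _, u := range updates {
			jobs <- u
		}
		close(jobs)
	}()

	// Close results once every worker has finished, which ends the loop below.
	go func() {
		wg.Wait()
		close(results)
	}()

	var out []Result
	for r := range results {
		out = append(out, r)
	}
	return out
}

func main() {
	updates := []PriceUpdate{{"SKU-1", 19.99}, {"SKU-2", -1}, {"SKU-3", 5}}
	results := runPool(updates, 5)
	failed := 0
	for _, r := range results {
		if r.Err != nil {
			failed++
			fmt.Println("failed:", r.Err)
		}
	}
	fmt.Printf("%d synced, %d failed\n", len(results)-failed, failed)
}
```

The worker count is the only concurrency knob. Raising it increases parallel API calls, and keeping it low respects the target system’s rate limits.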

Retry Logic Becomes Business Logic

When your retry mechanism lives inside Magento, it entangles with the commerce domain. Suddenly your order export module knows about exponential backoff, dead-letter queues, and circuit breakers. That’s integration infrastructure masquerading as commerce code. It doesn’t belong there.

Where Go Fits — And Why

Go isn’t the answer to everything. But for a specific class of e-commerce problems, it’s the right tool:

- High-volume imports/exports. Catalog feeds, pricing syncs, inventory updates — anything that processes thousands of records on a schedule. Go handles this with minimal memory, native concurrency, and no framework overhead.

- Integration middleware. DTO mapping between Magento’s data model and external systems (SAP, NetSuite, Dynamics). Go’s strong typing and struct marshaling make transformations explicit and testable.

- Event-driven workers. Order placed? Shipment created? Go consumers can pick up events from RabbitMQ or Kafka, process them with retries and idempotency, and report back — without touching Magento’s runtime.

- Shipment tracking updates. This is a pattern I’ve extracted into its own service. A warehouse or 3PL drops CSV files with tracking numbers. A Go service watches the directory, parses each file, looks up the order in Magento via REST API, and pushes the tracking data to the shipment — with a worker pool for concurrency, retry with backoff on API failures, and file lifecycle management (processed vs. failed). In Magento, this would be a brittle cron job fighting PHP’s memory model. In Go, it’s a clean daemon that runs indefinitely with a 15MB binary. I open-sourced this as tracking-updater — it’s a good reference for what this boundary looks like in practice.

- Search indexing pipelines. Pushing data to Elasticsearch or Algolia at scale. Go can stream, batch, and retry without blocking Magento’s indexer queue.

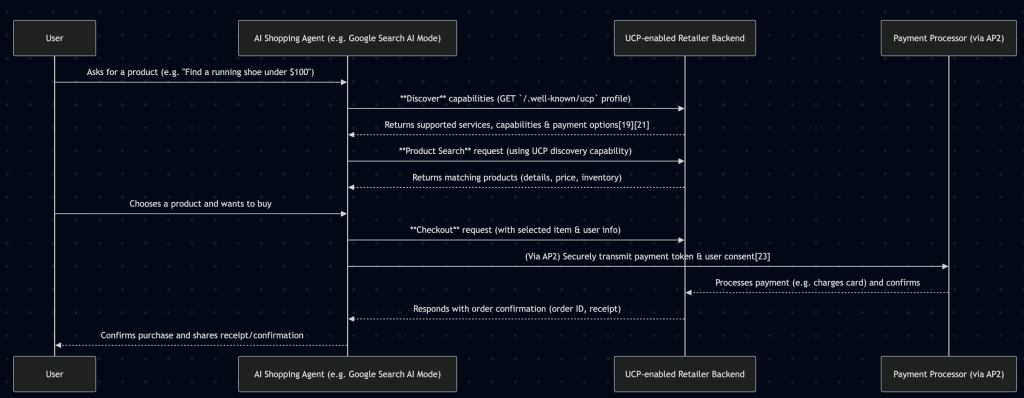

The pattern is consistent: Magento owns the commerce domain. Go handles the heavy lifting outside it.

Why Go Specifically?

- Compiled, single binary. Deploy a 15MB binary to a container. No Composer, no PHP extensions, no framework bootstrap.

- Goroutines. Lightweight concurrency that doesn’t require threading libraries or process management. The tracking-updater runs 5 concurrent workers processing files in parallel — each one independently calling Magento’s API. Try doing that reliably in a Magento cron job.

- Small memory footprint. A Go worker processing 10,000 messages uses less RAM than a single Magento CLI command bootstrapping the DI container.

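- Explicit error handling. Operations that can fail return an error value, so failure handling sits right next to the call instead of in a distant catch block. Linters such as errcheck can flag errors you forgot to check. This matters when you’re processing financial data between systems.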

The Magento Side of This

When you extract processing into Go, Magento doesn’t disappear. It just gets focused.

Here’s what Magento does in this pattern:

- Emits events. An observer fires on sales_order_place_after and publishes a message to RabbitMQ. That’s it. No transformation, no retry logic, no external API calls.

- Provides API endpoints. Go services call Magento’s REST API to read catalog data, update stock, or push shipment tracking. Magento serves the data; it doesn’t orchestrate the workflow.

- Exports payloads. A lightweight cron job serializes a batch of updated products into a queue message. The Go service picks it up and handles the rest.
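Because the observer only publishes, all of the processing logic lives in the Go consumer. That consumer has to tolerate redelivery: RabbitMQ can deliver the same message again if a consumer fails before acknowledging it. Here is a minimal sketch of an idempotent handler. It assumes a hypothetical JSON payload with `event_id`, `order_id`, and `increment_id` fields and keeps processed IDs in memory. The broker client is omitted, and `Handle` would be called once per delivered message body.

```go
package main

import (
	"encoding/json"
	"fmt"
	"sync"
)

// OrderPlaced is a hypothetical payload published by the Magento observer.
type OrderPlaced struct {
	EventID     string `json:"event_id"`
	OrderID     int    `json:"order_id"`
	IncrementID string `json:"increment_id"`
}

// Consumer remembers handled event IDs so redeliveries become no-ops.
// The map is in-memory for illustration; a real service would persist it
// (e.g. a table with a unique constraint on event_id).
type Consumer struct {
	mu        sync.Mutex
	processed map[string]bool
	export    func(OrderPlaced) error // e.g. push the order to the ERP
}

func NewConsumer(export func(OrderPlaced) error) *Consumer {
	return &Consumer{processed: make(map[string]bool), export: export}
}

// Handle decodes one message body and exports it at most once per EventID.
// A returned error tells the caller to nack the message; undecodable messages
// should go to a dead-letter queue rather than be requeued forever.
func (c *Consumer) Handle(body []byte) error {
	var evt OrderPlaced
	if err := json.Unmarshal(body, &evt); err != nil {
		return fmt.Errorf("decoding message: %w", err)
	}
	if evt.EventID == "" {
		return fmt.Errorf("message has no event_id")
	}

	// The lock is held during export for simplicity, which serializes exports.
	c.mu.Lock()
	defer c.mu.Unlock()
	if c.processed[evt.EventID] {
		return nil // duplicate delivery: already exported, just ack it
	}
	if err := c.export(evt); err != nil {
		return err // not marked as processed, so a redelivery will try again
	}
	c.processed[evt.EventID] = true
	return nil
}

func main() {
	exports := 0
	c := NewConsumer(func(o OrderPlaced) error {
		exports++
		fmt.Println("exporting order", o.IncrementID)
		return nil
	})
	msg := []byte(`{"event_id":"evt-1","order_id":42,"increment_id":"000000042"}`)
	_ = c.Handle(msg)
	_ = c.Handle(msg) // simulated redelivery
	fmt.Println("exports:", exports)
}
```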

The key insight: Magento becomes a data source and event emitter, not a processing engine. It stays fast, upgradeable, and focused on what it’s good at — rendering storefronts and managing commerce state.

Making the Boundary Clean

The hardest part of this pattern isn’t the Go code. It’s the interface between Go and Magento. Magento’s REST API is verbose — search criteria filters, nested extension attributes, inconsistent response structures. If every Go microservice hand-rolls its own HTTP client for Magento, you end up with duplicated auth logic, inconsistent error handling, and DTO structs scattered across repos.
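To show what “verbose” means in practice, here is roughly what hand-rolling Magento search criteria looks like with only the standard library. This is not go-m2rest’s API. `Filter` and `SearchCriteriaQuery` are hypothetical names, the host is a placeholder, and authentication is omitted. The parameter names follow Magento’s documented `searchCriteria` format: filters within a filter group are ORed, and filter groups are ANDed.

```go
package main

import (
	"fmt"
	"net/url"
)

// Filter is one Magento search criteria filter.
type Filter struct {
	Field, Value, Condition string
}

// SearchCriteriaQuery encodes filters as Magento's nested searchCriteria
// parameters. Each filter gets its own filter group, so they are ANDed.
func SearchCriteriaQuery(filters []Filter, pageSize, currentPage int) url.Values {
	q := url.Values{}
	for i, f := range filters {
		prefix := fmt.Sprintf("searchCriteria[filter_groups][%d][filters][0]", i)
		q.Set(prefix+"[field]", f.Field)
		q.Set(prefix+"[value]", f.Value)
		q.Set(prefix+"[condition_type]", f.Condition)
	}
	q.Set("searchCriteria[pageSize]", fmt.Sprint(pageSize))
	q.Set("searchCriteria[currentPage]", fmt.Sprint(currentPage))
	return q
}

func main() {
	q := SearchCriteriaQuery([]Filter{
		{"increment_id", "000000042", "eq"},
	}, 20, 1)
	u := url.URL{
		Scheme:   "https",
		Host:     "shop.example.com", // placeholder host
		Path:     "/rest/V1/orders",
		RawQuery: q.Encode(), // brackets are percent-encoded
	}
	fmt.Println(u.String())
}
```

Multiply this by every service and every endpoint, then add auth and error handling, and the case for one shared client becomes clear.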

That’s why I built go-m2rest, an open-source Go client library for Magento 2’s REST API. It covers products, orders, categories, attributes, carts, and configurable product management. It handles authentication (bearer token, admin credentials, or customer credentials), retries automatically on 500/503 responses, and provides typed Go structs for every Magento entity it covers.

Instead of hand-crafting search criteria URL parameters, you call a typed method. Instead of parsing nested JSON responses into map[string]interface{}, you get proper Go structs with explicit fields. The tracking-updater uses it under the hood — one library handles the Magento boundary, every service on top of it stays focused on its own domain.

This is what “boundary thinking” looks like in practice: one clean interface between your Go services and Magento, not a dozen ad-hoc integrations.

Decision Checklist: Extract or Keep?

Extract to Go when:

- The job processes more than 1,000 records per run

- It requires retries, backoff, or dead-letter handling

- It talks to external APIs with rate limits or unreliable uptime

- It runs longer than 60 seconds consistently

- It needs true concurrency (parallel API calls, batch processing)

- The logic has nothing to do with commerce (DTO mapping, file parsing, data reconciliation)

Keep in Magento when:

- It’s tightly coupled to commerce state (cart rules, tax calculation, checkout flow)

- It runs in under 10 seconds and processes small batches

- It only reads/writes Magento data with no external system involved

- The team has no capacity to operate a second runtime

- It’s a one-off migration script, not a recurring process

Be honest about the team-capacity point. If your team can’t deploy and monitor a Go service today, the answer isn’t “force Magento to do it.” It’s “build the capability.” But it’s also not “rewrite everything in Go next sprint.”

The Leadership Question

If you’re a tech lead or CTO, here’s the real question: What’s the cost of running integration logic inside your commerce platform?

It’s not just performance. It’s:

- Upgrade risk. Every custom cron job and queue consumer is another thing that can break during a Magento version upgrade. A Go service that talks to Magento over REST? As long as the endpoints it depends on keep their contract, it doesn’t care which Magento version you’re on. The API contract is the boundary.

- Team bottleneck. Your Magento developers are writing retry logic instead of building commerce features.

- Debugging complexity. When an ERP sync fails at 3 AM, your on-call engineer needs to understand both Magento’s DI and SAP’s API. That’s two domains in one codebase. Separate services mean separate logs, separate deployments, separate failure domains.

- Scaling cost. You can’t scale integration workers independently of your web nodes. Need more processing power? You’re scaling the entire Magento stack.

Extracting heavy processing into Go services isn’t just a technical decision. It’s a business decision about where your team spends its time and how resilient your platform is under pressure.

The Line Is the Architecture

The most important thing you can draw in your system isn’t a class diagram or a sequence diagram. It’s a boundary.

On one side: Magento handles commerce — catalog, cart, checkout, customer, orders. On the other side: Go handles everything that isn’t commerce — transformations, integrations, heavy processing, external system communication.

That line is your architecture. Everything else is implementation detail.

Stop asking Magento to be something it was never designed to be. Let it do what it’s good at. And put the heavy lifting where it belongs.