Export raw data from Magento. Transform it somewhere else. This is the pattern.

Every Magento integration starts the same way. Someone writes a module that reads order data, reformats it into the ERP’s expected structure, and pushes it via API — all inside a cron job. It works for the first integration. By the third, you have a transformation layer, a retry mechanism, and error handling logic buried inside Magento’s runtime. You didn’t plan to build middleware. But that’s what you built — bad middleware, running inside your commerce engine.

There’s a cleaner approach, and it starts with accepting one thing: Magento should export raw data. Everything else — mapping, retries, failure handling — belongs in a dedicated middleware layer.

Why Integration Logic Ends Up in Magento

It’s the same story every time. A new integration requirement comes in — push orders to SAP, sync inventory from a WMS, send shipment confirmations to a 3PL. The team looks at what they have: Magento’s cron, its queue consumers, its DI container. Why introduce another service when you can write a module?

Here’s the trap:

- The first integration is always simple. One cron job, one API call, one format. It fits neatly in a Magento module.

- The second integration copies the pattern. Now you have two cron jobs with similar-but-different transformation logic.

- By the fifth, you have an unmaintainable mess. Retry logic is entangled with DTO mapping. Error states are logged to `var/log/` with no alerting. Failed records get silently skipped because nobody built a dead-letter queue inside a Magento module.

The real problem isn’t technical — it’s organizational. Agencies scope Magento hours. Nobody budgets for middleware. So transformation logic grows inside the platform like mold behind drywall.

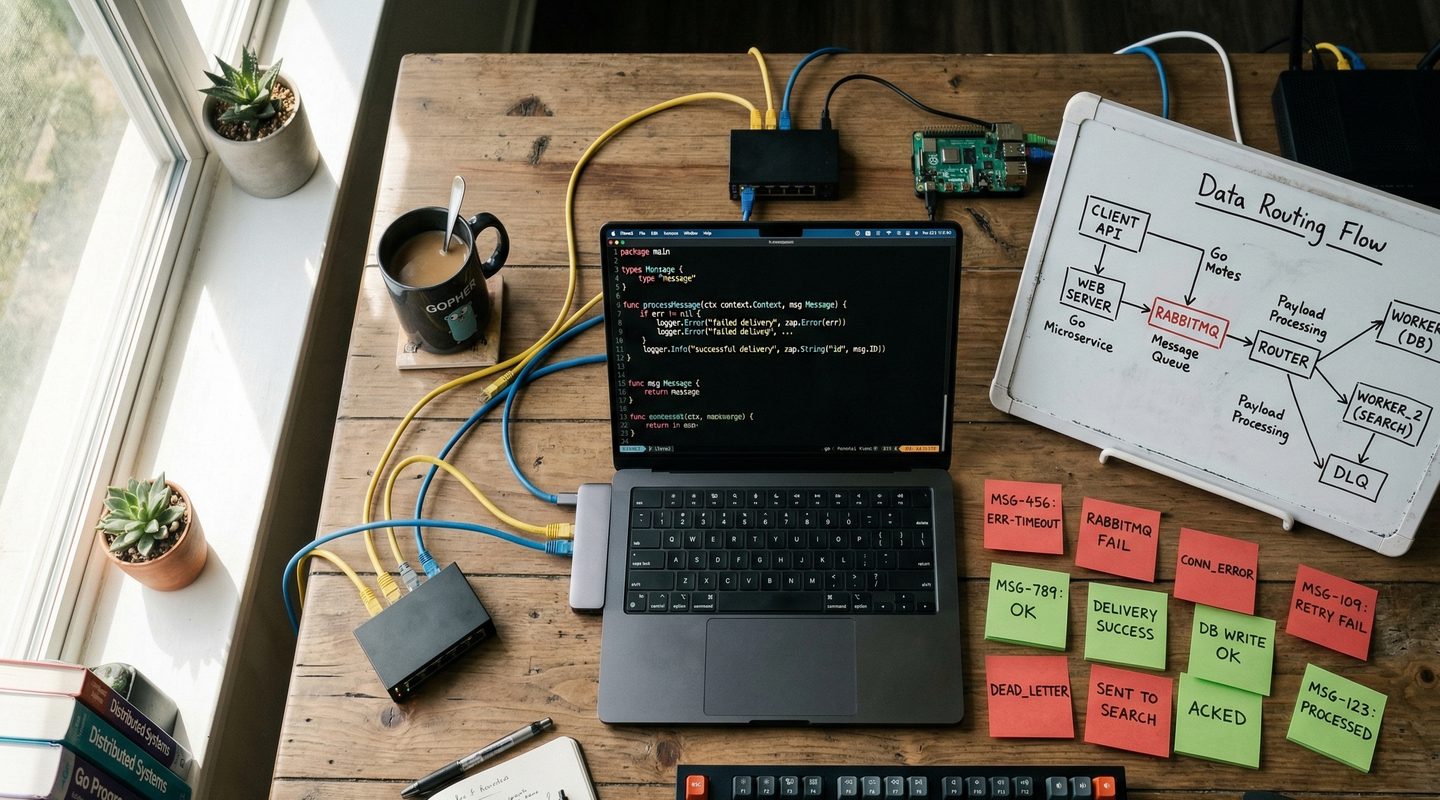

The Pattern: Export Raw, Transform Outside

The architecture is straightforward:

Magento exports raw commerce data. Orders, prices, inventory levels, customer updates — whatever the integration needs. The format is Magento’s native structure. No transformation. No ERP-specific fields. Just clean commerce payloads pushed to a queue or exposed via API.

Middleware receives, maps, and delivers. A Go service (or any dedicated middleware) picks up the raw payload, transforms it into the target system’s DTO, and pushes it to the ERP, WMS, or 3PL. This is where field mapping lives. This is where business rules for transformation live. And this is where retry logic belongs.

This separation has three immediate benefits:

- Magento stays focused on commerce. No SAP field mappings polluting your order export module. No ERP connection timeouts blocking your cron runner.

- Transformation logic is testable in isolation. You can unit test a DTO mapping function without bootstrapping Magento’s DI container.

- Each integration is independent. SAP’s retry policy doesn’t affect your shipping provider integration. Failures are isolated.

DTO Mapping: Keep It Boring

DTO mapping sounds trivial. It’s not — at scale.

An order in Magento has nested structures: items, addresses, payment info, custom attributes, extension attributes. An ERP expects a flat document with specific field names, date formats, and enum values that don’t match Magento’s. The mapping between these two is where most integration bugs live.
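Dates and enums are two of those mismatches that deserve explicit handling. The sketch below assumes Magento's `created_at` string format (`2024-03-01 14:05:09`, treated as UTC) and a hypothetical ERP that wants `YYYYMMDD` dates and its own status codes. The codes in `erpStatus` are invented for illustration.

```go
package main

import (
	"fmt"
	"time"
)

// magentoTimeLayout matches Magento's created_at strings, e.g. "2024-03-01 14:05:09".
const magentoTimeLayout = "2006-01-02 15:04:05"

// erpDateLayout is a hypothetical ERP date format (YYYYMMDD).
const erpDateLayout = "20060102"

// ToERPDate converts a Magento timestamp (assumed UTC) to the ERP date field.
// If the ERP expects the store's local date, convert with t.In(loc) first.
func ToERPDate(createdAt string) (string, error) {
	t, err := time.ParseInLocation(magentoTimeLayout, createdAt, time.UTC)
	if err != nil {
		return "", fmt.Errorf("parse created_at %q: %w", createdAt, err)
	}
	return t.Format(erpDateLayout), nil
}

// erpStatus maps Magento order states to hypothetical ERP status codes.
var erpStatus = map[string]string{
	"new":        "OPEN",
	"processing": "REL",
	"complete":   "DONE",
	"canceled":   "CANC",
}

// ToERPStatus fails on states the mapping does not cover instead of
// silently passing an unknown value through to the ERP.
func ToERPStatus(state string) (string, error) {
	code, ok := erpStatus[state]
	if !ok {
		return "", fmt.Errorf("no ERP status for Magento state %q", state)
	}
	return code, nil
}
```

Returning an error for an unmapped value, rather than forwarding it unchanged, turns a silent data bug into a visible failure.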

The rules are simple:

- One mapper per integration target. Don’t build a generic “transform anything” engine. You’ll end up maintaining a DSL nobody understands. Write explicit struct-to-struct mappings.

- Validate before sending. After mapping, validate the output DTO against the target system’s requirements. Catch missing fields and format errors before they become API failures.

- Version your DTOs. When the ERP updates their API, you change the mapper. The Magento export doesn’t change. The queue format doesn’t change. Only the last mile changes.
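A minimal validation sketch, assuming a hypothetical flat `ERPOrder` document and made-up rules (required order number, `YYYYMMDD` date, three-letter currency, at least one line). It collects every problem with `errors.Join` (Go 1.20+), so a rejected record explains all of its issues at once.

```go
package main

import (
	"errors"
	"fmt"
	"regexp"
)

// ERPOrder is a hypothetical flat document expected by the target ERP.
type ERPOrder struct {
	OrderNo   string
	OrderDate string // YYYYMMDD
	Currency  string // ISO 4217 code, e.g. "EUR"
	Lines     []ERPLine
}

// ERPLine is one order line in the hypothetical ERP format.
type ERPLine struct {
	Material string
	Qty      float64
}

var erpDate = regexp.MustCompile(`^\d{8}$`)

// Validate checks the mapped DTO before it is sent and returns all
// violations joined into one error, or nil if the document is valid.
func (o ERPOrder) Validate() error {
	var errs []error
	if o.OrderNo == "" {
		errs = append(errs, errors.New("OrderNo is required"))
	}
	if !erpDate.MatchString(o.OrderDate) {
		errs = append(errs, fmt.Errorf("OrderDate %q is not YYYYMMDD", o.OrderDate))
	}
	if len(o.Currency) != 3 {
		errs = append(errs, fmt.Errorf("Currency %q is not a 3-letter code", o.Currency))
	}
	if len(o.Lines) == 0 {
		errs = append(errs, errors.New("at least one line is required"))
	}
	for i, l := range o.Lines {
		if l.Material == "" {
			errs = append(errs, fmt.Errorf("line %d: Material is required", i))
		}
		if l.Qty <= 0 {
			errs = append(errs, fmt.Errorf("line %d: Qty must be positive", i))
		}
	}
	return errors.Join(errs...) // nil when errs is empty
}
```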

In Go, this is particularly clean. You define typed structs for both source (Magento payload) and target (ERP document), write a function that maps one to the other, and test it with table-driven tests. No reflection magic. No runtime surprises.
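A sketch of that shape, with the caveat that only the JSON field names (`increment_id`, `created_at`, `order_currency_code`, `items`, `sku`, `qty_ordered`, `parent_item_id`) come from Magento's order payload. `SAPOrder`, `SAPLine`, and `MapToSAP` are hypothetical. The item filter assumes the target wants one line per top-level item, because Magento lists a configurable product as a parent row plus a child row carrying `parent_item_id`.

```go
package main

import "time"

// MagentoOrder is the subset of Magento's order payload this mapper reads.
type MagentoOrder struct {
	IncrementID  string        `json:"increment_id"`
	CreatedAt    string        `json:"created_at"`
	CurrencyCode string        `json:"order_currency_code"`
	Items        []MagentoItem `json:"items"`
}

// MagentoItem is one row of the order's items array.
type MagentoItem struct {
	SKU          string  `json:"sku"`
	QtyOrdered   float64 `json:"qty_ordered"`
	ParentItemID *int    `json:"parent_item_id,omitempty"`
}

// SAPOrder and SAPLine form a hypothetical flat ERP document.
type SAPOrder struct {
	OrderNo   string
	OrderDate string // YYYYMMDD
	Currency  string
	Lines     []SAPLine
}

type SAPLine struct {
	Material string
	Qty      float64
}

// MapToSAP is an explicit struct-to-struct mapping: no reflection, no DSL.
func MapToSAP(o MagentoOrder) (SAPOrder, error) {
	t, err := time.Parse("2006-01-02 15:04:05", o.CreatedAt)
	if err != nil {
		return SAPOrder{}, err
	}
	out := SAPOrder{
		OrderNo:   o.IncrementID,
		OrderDate: t.Format("20060102"),
		Currency:  o.CurrencyCode,
	}
	for _, it := range o.Items {
		// Assumption: child rows (parent_item_id set) are skipped so each
		// configurable product produces exactly one ERP line.
		if it.ParentItemID != nil {
			continue
		}
		out.Lines = append(out.Lines, SAPLine{Material: it.SKU, Qty: it.QtyOrdered})
	}
	return out, nil
}
```

In a `_test.go` file, the cases would sit in a table inside a `TestMapToSAP(t *testing.T)` function, one row per Magento edge case that has caused a bad export.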

If you’re building Go services that consume Magento’s REST API, go-m2rest gives you typed structs and a search criteria builder out of the box — so you’re not hand-rolling HTTP calls and JSON parsing for every integration.

Retries and Backoff: The Non-Negotiable

External APIs fail. ERP endpoints go down for maintenance. Rate limits get hit during peak sync windows. If your integration doesn’t handle retries properly, you lose data.

But “just retry” is not a strategy. Here’s what actually works:

- Exponential backoff with jitter. Don’t hammer a struggling endpoint with fixed-interval retries. Back off exponentially, add random jitter to prevent thundering herd, and cap the maximum delay.

- Retry budget per message. Set a maximum retry count (typically 3-5). After that, the message moves to the dead-letter queue. Don’t retry forever — you’ll mask the real problem.

- Distinguish transient from permanent failures. A 503 is retryable. A 400 with a validation error is not. Retrying a permanent failure wastes time and obscures the root cause.

- Make retries idempotent. If you retry pushing an order to the ERP, the ERP must handle receiving the same order twice. This means idempotency keys, deduplication checks, or upsert semantics. Without this, every retry is a potential duplicate.

In Magento’s cron model, retry logic becomes a state machine managed in database columns — retry_count, last_attempt, status. It works until you have 50,000 queued records and your cron job takes 45 minutes to scan the table. In a Go service, retries are part of the message processing loop with in-memory state and configurable policies per consumer.

Dead-Letter Queues: Where Failed Messages Go to Be Fixed

A dead-letter queue (DLQ) is not a graveyard — it’s a triage room. Messages end up here when they’ve exhausted their retry budget. The DLQ gives you:

- Visibility. You know exactly which records failed and why. No more grepping through Magento logs hoping to find the error.

- Replay capability. Fix the root cause (bad mapping, API change, missing field), then replay the failed messages. No data loss. No manual re-entry.

- Alerting. A message hitting the DLQ triggers an alert. Your team knows something is broken before the business notices missing orders.

The pattern in practice: your Go consumer reads from a main queue (RabbitMQ, Kafka, SQS). If processing fails after N retries, it publishes the message to a DLQ topic with metadata — original timestamp, error message, retry count. A separate dashboard or CLI tool lets you inspect, fix, and replay.

I built a file-based version of this pattern into tracking-updater: failed shipment tracking updates move to a failed directory with full context and can be reprocessed once the issue is resolved.

The Magento Side of This

Magento’s job in this pattern is minimal — and that’s the point.

- Emit events or queue messages when orders are placed, shipments are created, or prices are updated. Use Magento’s native message queue framework (`communication.xml`, `queue_publisher.xml`, and `queue_topology.xml`) to publish to RabbitMQ. Keep the message payload as Magento’s native data structure.

- Expose REST/GraphQL endpoints for the middleware to pull data on demand. Let go-m2rest handle the API calls with built-in auth and retry.

- Don’t transform. Magento doesn’t know or care what format SAP expects. It exports commerce data. Period.

This keeps your Magento modules thin, upgrade-safe, and decoupled from every downstream system. When the ERP changes their API, you update the Go middleware. Magento doesn’t get a new deployment.

Decision Framework

Move integration logic to middleware when:

- You have more than one external system consuming Magento data

- Retry and error handling requirements differ per integration

- Transformation logic changes independently from commerce logic

- You need visibility into failed records beyond Magento logs

- Your cron runner is already contended with indexers and other jobs

Keep it in Magento when:

- It’s a single, simple integration with minimal transformation

- The team has no capacity to operate a separate service

- The data volume is low (hundreds of records, not thousands)

- There’s no retry or failure handling requirement beyond “log and move on”

The Leadership Question

Middleware sounds like additional infrastructure cost. It is — initially. But consider the alternative.

Every integration you embed in Magento increases deployment risk. A bug in your SAP mapper can block a Magento release. A stuck cron job can delay indexing. A retry loop can consume PHP workers that should be serving checkout requests.

The real cost isn’t the Go service. It’s the operational coupling. When your integration layer is separate, you can deploy, scale, and debug it independently. Your Magento release pipeline stays clean. Your commerce platform does commerce.

That’s not over-engineering. That’s drawing the right boundary.

Conclusion

The pattern is simple: Magento exports raw data. Middleware transforms, retries, and delivers. Failed messages land in a dead-letter queue where they can be inspected and replayed.

This isn’t a theoretical architecture diagram. It’s what happens when you stop treating Magento as your integration engine and start treating it as your commerce engine. The middleware layer — whether it’s a Go service, a dedicated integration platform, or even a managed queue with Lambda functions — is where transformation and reliability belong.

Draw the boundary. Keep Magento focused. Build your middleware to handle the mess that integration always is.