A 4-hour product import is a business problem, not just a technical one.

When your catalog update blocks pricing changes until 2 AM, when your PIM sync delays product launches by half a day, when your inventory feed takes so long that stock levels are stale before they’re live — that’s not a performance issue. That’s a business constraint masquerading as a technical limitation.

Most Magento teams accept slow imports as a fact of life. They shouldn’t. The problem isn’t Magento’s import capacity. The problem is that the import pipeline was never designed as a pipeline at all.

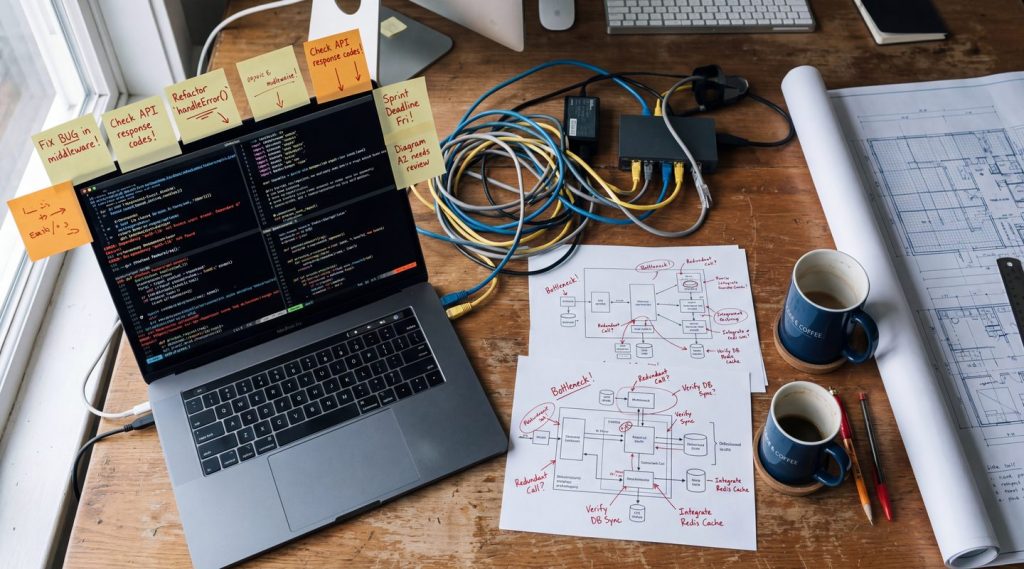

Why Imports Are Slow

The typical Magento import path looks like this: a CSV or XML file lands somewhere. A cron job picks it up. It reads the entire file into memory. It validates every row. It writes to the database row by row, maybe in small batches. If it fails at row 48,000 of 50,000, you start over.

This pattern has three fundamental problems:

- No concurrency. A single PHP process handles the entire file. One thread, one connection, one row at a time. On a 200,000-SKU catalog, that’s hours.

- No partial recovery. If the process dies — out of memory, database timeout, deployment restart — you lose all progress. The entire import reruns from scratch.

- No backpressure. The import doesn’t know or care if the database is under load, if the indexer is running, or if another import is already in progress. It just pushes.

This isn’t a criticism of Magento’s native import. Magento’s ImportExport module was designed for admin-triggered, occasional bulk operations. It was never intended to be the backbone of a continuous data pipeline.

The mistake is treating it as one.

The Import Pipeline Model

A well-designed import pipeline has distinct stages, each with its own concerns. Think of it as a factory line, not a single machine.

Stage 1: Ingest. Accept the incoming data. Parse the file or consume the API response. Validate the schema — not the business rules, just the structure. Reject malformed records immediately. Output: a stream of validated, normalized records.

Stage 2: Transform. Map external data to your internal format. PIM field names become Magento attribute codes. ERP price structures become tier pricing rows. Currency conversions happen here. DTO mapping — the same pattern from middleware design — applies directly.

Stage 3: Batch and dispatch. Group records into optimally sized batches. Too small and you pay overhead per request. Too large and a single failure is expensive. Dispatch batches to a pool of concurrent workers.

Stage 4: Write. Each worker sends its batch to the Magento REST API or directly to the database via a dedicated import service. Each batch is independently retryable. Each batch is independently idempotent.

Stage 5: Report. Track which batches succeeded, which failed, and which are pending. Provide a summary. Feed failures into a dead-letter queue for investigation and replay.

This is a pipeline. Each stage can be optimized independently. Each stage can fail independently without taking down the whole operation.
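As a minimal sketch of the first two stages, assuming a hypothetical PIM feed with the fields `item_number`, `title`, and `list_price` (these names, and the `PIMRecord` and `Product` types, are illustrative and not taken from any real system), ingest checks structure only and transform maps external names to internal ones:

```go
package main

import (
	"errors"
	"fmt"
	"strconv"
	"strings"
)

// PIMRecord is a hypothetical raw record as it arrives from an external feed.
type PIMRecord map[string]string

// Product is a simplified, hypothetical internal shape.
type Product struct {
	SKU   string
	Name  string
	Price float64
}

// validateSchema checks structure only (Stage 1): required fields are present.
func validateSchema(r PIMRecord) error {
	for _, field := range []string{"item_number", "title", "list_price"} {
		if strings.TrimSpace(r[field]) == "" {
			return errors.New("missing field: " + field)
		}
	}
	return nil
}

// transform maps external field names to internal ones (Stage 2).
func transform(r PIMRecord) (Product, error) {
	price, err := strconv.ParseFloat(r["list_price"], 64)
	if err != nil {
		return Product{}, fmt.Errorf("sku %s: bad price: %w", r["item_number"], err)
	}
	return Product{SKU: r["item_number"], Name: r["title"], Price: price}, nil
}

// ingestAndTransform runs both stages and separates good records from rejects.
func ingestAndTransform(in []PIMRecord) ([]Product, []error) {
	var products []Product
	var rejected []error
	for _, r := range in {
		if err := validateSchema(r); err != nil {
			rejected = append(rejected, err)
			continue
		}
		p, err := transform(r)
		if err != nil {
			rejected = append(rejected, err)
			continue
		}
		products = append(products, p)
	}
	return products, rejected
}

func main() {
	records := []PIMRecord{
		{"item_number": "SKU-1", "title": "Mug", "list_price": "9.50"},
		{"item_number": "SKU-2", "title": ""}, // malformed: rejected at ingest
	}
	products, rejected := ingestAndTransform(records)
	fmt.Println(len(products), len(rejected)) // prints "1 1"
}
```

Rejected records are collected rather than aborting the run, so one malformed row never blocks the rest of the file.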

Concurrency: The Biggest Win

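The single biggest performance improvement in any import redesign is concurrency. A Go service with a worker pool of 10 goroutines can process 10 batches simultaneously. If the write stage is the bottleneck and Magento keeps up with the load, that alone can turn a 4-hour import into roughly a 25-minute one (240 minutes divided across 10 workers is 24 minutes, plus coordination overhead).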

But concurrency isn’t free. You need to manage it carefully.

Worker pool size. This depends on the bottleneck. If you’re writing to Magento’s REST API, the limit is what Magento can handle — typically 5 to 20 concurrent connections before response times degrade. If you’re writing directly to the database, the limit is connection pool size and lock contention. Start with 5 workers and measure. Double until throughput stops improving or error rates spike.
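A fixed-size worker pool can be sketched in Go with a channel and a `sync.WaitGroup`. The `Batch` and `Result` types and the `write` callback are hypothetical placeholders; a real `write` would call Magento or the database:

```go
package main

import (
	"fmt"
	"sync"
)

// Batch is a hypothetical unit of work: a sequence number plus the SKUs it carries.
type Batch struct {
	Seq  int
	SKUs []string
}

// Result records the outcome of writing one batch.
type Result struct {
	Seq int
	Err error
}

// runPool starts `workers` goroutines that pull batches from a shared channel
// and call write for each one. It returns one Result per batch, in completion order.
func runPool(workers int, batches []Batch, write func(Batch) error) []Result {
	jobs := make(chan Batch)
	// Buffered so that workers never block while reporting a result.
	results := make(chan Result, len(batches))

	var wg sync.WaitGroup
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for b := range jobs {
				results <- Result{Seq: b.Seq, Err: write(b)}
			}
		}()
	}

	for _, b := range batches {
		jobs <- b
	}
	close(jobs)
	wg.Wait()
	close(results)

	out := make([]Result, 0, len(batches))
	for r := range results {
		out = append(out, r)
	}
	return out
}

func main() {
	batches := make([]Batch, 20)
	for i := range batches {
		batches[i] = Batch{Seq: i + 1}
	}
	// The write function is a stand-in that always succeeds.
	results := runPool(5, batches, func(b Batch) error { return nil })
	fmt.Println(len(results)) // prints 20
}
```

Changing the first argument of `runPool` is the only thing needed to try 5, 10, or 20 workers while measuring throughput.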

Rate limiting. Your import service should respect Magento’s capacity. If response times go above a threshold — say, 2 seconds per batch — reduce concurrency dynamically. This is backpressure in practice: your producer slows down when the consumer signals it’s overloaded.
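One common way to implement this kind of dynamic adjustment is additive increase, multiplicative decrease (AIMD). The sketch below is one possible policy, not a prescribed one; the `Limits` type, the function name, and the sample latencies are assumptions for illustration:

```go
package main

import (
	"fmt"
	"time"
)

// Limits bounds the number of concurrent workers.
type Limits struct {
	Min, Max int
}

// nextConcurrency applies additive increase / multiplicative decrease:
// halve the worker count when a batch was slower than the threshold,
// otherwise add one worker, always staying within the limits.
func nextConcurrency(current int, latency, threshold time.Duration, l Limits) int {
	next := current + 1
	if latency > threshold {
		next = current / 2
	}
	if next < l.Min {
		next = l.Min
	}
	if next > l.Max {
		next = l.Max
	}
	return next
}

func main() {
	limits := Limits{Min: 1, Max: 20}
	threshold := 2 * time.Second
	workers := 5
	// Hypothetical observed batch latencies.
	latencies := []time.Duration{
		800 * time.Millisecond, 900 * time.Millisecond, 3 * time.Second, time.Second,
	}
	for _, latency := range latencies {
		workers = nextConcurrency(workers, latency, threshold, limits)
		fmt.Println(latency, "->", workers)
	}
}
```

With these sample latencies the worker count moves from 5 to 6, 7, 3, and then 4: it grows slowly while Magento responds quickly and backs off sharply after the 3-second batch.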

Resource isolation. Run imports through a dedicated database connection pool or connection group if possible, and send any read-heavy lookups (existence checks, attribute validation) to a read replica, since a replica cannot accept the writes themselves. Don’t let a bulk import compete with checkout traffic for the same database connections. This is an infrastructure decision, but it needs to be designed at the pipeline level.

Batching Strategy

Batch size is a trade-off between throughput and blast radius.

- Too small (1-10 records per batch): HTTP overhead dominates. You spend more time on connection setup and teardown than on actual data transfer. 10,000 products at 1 per request means 10,000 API calls.

- Too large (5,000+ records per batch): A single failure is expensive. If batch 47 of 50 fails, you’re retrying 5,000 records. Memory usage spikes. Timeouts become likely.

- Sweet spot (50-500 records per batch): Enough to amortize HTTP overhead. Small enough that retries are cheap. A failed batch of 200 records reruns in seconds, not minutes.

The right number depends on your payload size and API. For Magento REST API product updates, 100-200 per batch is a reliable starting point. For direct database writes, you can go larger — 500-1,000 — because you control the transaction boundaries.
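The batching step itself is small. A minimal sketch, assuming Go 1.18 or later for generics (the `chunk` name is illustrative), splits a slice of records into fixed-size batches and shows how batch size changes the number of requests:

```go
package main

import "fmt"

// chunk splits items into consecutive batches of at most size elements.
// The final batch may be smaller. A non-positive size yields a single batch.
// The returned batches share the backing array of items.
func chunk[T any](items []T, size int) [][]T {
	if len(items) == 0 {
		return nil
	}
	if size <= 0 {
		size = len(items)
	}
	batches := make([][]T, 0, (len(items)+size-1)/size)
	for start := 0; start < len(items); start += size {
		end := start + size
		if end > len(items) {
			end = len(items)
		}
		batches = append(batches, items[start:end])
	}
	return batches
}

func main() {
	skus := make([]string, 10000)
	for i := range skus {
		skus[i] = fmt.Sprintf("SKU-%05d", i+1)
	}
	for _, size := range []int{1, 200, 5000} {
		fmt.Printf("batch size %d -> %d requests\n", size, len(chunk(skus, size)))
	}
}
```

For 10,000 products this reports 10,000 requests at a batch size of 1, 50 at 200, and 2 at 5,000, which is the throughput versus blast radius trade-off in numbers.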

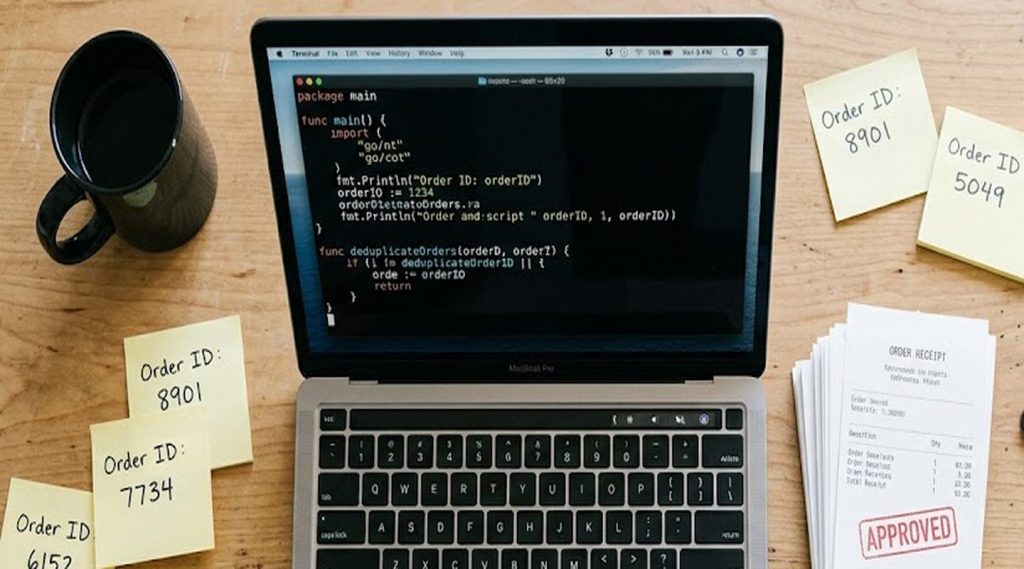

Partial Failures and Resumable Jobs

In a long-running import, failures are guaranteed. Network blips, database locks, Magento throwing a 500 on a specific SKU with invalid attribute data. The question is whether a failure in batch 47 means you redo batches 1 through 46.

It shouldn’t.

Every batch should be independently trackable. A simple state store — Redis, a database table, even a local file — records which batches have completed. When the import resumes after a failure, it skips completed batches and picks up where it left off.

This is the same idempotency principle from the previous article in this series, applied at the batch level. Each batch gets a deterministic ID derived from the import run ID and the batch sequence number. If the batch was already processed successfully, skip it. If it was partially processed or failed, retry it.

The result: a 200,000-record import in batches of 200 that fails around record 150,000 doesn’t restart from zero. It skips the batches already marked complete, retries the failed batch of 200 records (batch 750 of 1,000), continues from batch 751, and finishes in minutes instead of hours.
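A resumable run can be sketched as follows. The in-memory `StateStore` stands in for Redis or a database table, and the hashing scheme, `runImport`, and `errSimulated` are assumptions chosen for illustration; for simplicity this sequential version stops at the first failure instead of routing it to a dead-letter queue:

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"errors"
	"fmt"
)

// errSimulated is a stand-in for a real write failure.
var errSimulated = errors.New("simulated 500 from Magento")

// batchID derives a deterministic ID from the import run ID and the batch sequence number.
func batchID(runID string, seq int) string {
	sum := sha256.Sum256([]byte(fmt.Sprintf("%s:%d", runID, seq)))
	return hex.EncodeToString(sum[:8])
}

// StateStore is a hypothetical in-memory stand-in for Redis or a database table.
type StateStore map[string]bool

// runImport writes batches 1..total, skipping any batch already marked done.
// It stops at the first error so the caller can resume later with the same store.
func runImport(runID string, total int, done StateStore, write func(seq int) error) (int, error) {
	written := 0
	for seq := 1; seq <= total; seq++ {
		id := batchID(runID, seq)
		if done[id] {
			continue // completed in an earlier attempt
		}
		if err := write(seq); err != nil {
			return written, fmt.Errorf("batch %d (%s): %w", seq, id, err)
		}
		done[id] = true
		written++
	}
	return written, nil
}

func main() {
	done := StateStore{}
	failOnce := true
	write := func(seq int) error {
		if seq == 750 && failOnce {
			failOnce = false
			return errSimulated
		}
		return nil
	}

	n, err := runImport("run-001", 1000, done, write)
	fmt.Println(n, err != nil) // prints "749 true"

	n, err = runImport("run-001", 1000, done, write)
	fmt.Println(n, err) // prints "251 <nil>"
}
```

The second call does no work for the 749 batches already recorded; it retries batch 750 and continues to the end.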

Streaming vs. Bulk

There are two models for feeding data into the pipeline.

Bulk file processing. A file arrives — CSV, XML, JSON — and the pipeline processes it end to end. This is the traditional model. It works well for scheduled imports: nightly catalog syncs, weekly price updates, monthly inventory reconciliations.

Streaming / event-driven. Records arrive continuously from a message queue or webhook. The pipeline processes them as they come, individually or in micro-batches. This works for real-time use cases: PIM publishes a product change, the pipeline picks it up within seconds.

Most production systems need both. The nightly bulk import handles the full catalog reconciliation. The streaming pipeline handles intra-day updates. The pipeline design is the same — ingest, transform, batch, write, report — but the ingestion stage differs.

In Go, this maps cleanly to the same worker pool pattern. Bulk mode reads from a file and feeds batches to the pool. Stream mode reads from a queue (RabbitMQ, Kafka, SQS) and feeds individual messages or micro-batches to the same pool. The downstream stages don’t care where the data came from.
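A sketch of that shared shape: both modes produce a channel of records, and one micro-batching function groups them for the pool. Records are plain strings here for brevity, and `fromLines` and `microBatch` are illustrative names; in stream mode the input channel would be fed by a queue consumer instead of a file reader:

```go
package main

import (
	"bufio"
	"fmt"
	"io"
	"strings"
	"time"
)

// fromLines is the bulk-mode source: it emits one record per line.
// Scanner errors are ignored here to keep the sketch short.
func fromLines(r io.Reader) <-chan string {
	out := make(chan string)
	go func() {
		defer close(out)
		sc := bufio.NewScanner(r)
		for sc.Scan() {
			out <- sc.Text()
		}
	}()
	return out
}

// microBatch groups records from in into batches of at most size records.
// A partial batch is flushed on every tick of maxWait and when in is closed.
// maxWait must be positive, because time.NewTicker panics otherwise.
func microBatch(in <-chan string, size int, maxWait time.Duration) <-chan []string {
	out := make(chan []string)
	go func() {
		defer close(out)
		ticker := time.NewTicker(maxWait)
		defer ticker.Stop()
		var batch []string
		flush := func() {
			if len(batch) > 0 {
				out <- batch
				batch = nil
			}
		}
		for {
			select {
			case rec, ok := <-in:
				if !ok {
					flush()
					return
				}
				batch = append(batch, rec)
				if len(batch) >= size {
					flush()
				}
			case <-ticker.C:
				flush()
			}
		}
	}()
	return out
}

func main() {
	// Bulk mode: a file (here an in-memory reader) feeds the batcher.
	file := strings.NewReader("SKU-1\nSKU-2\nSKU-3\nSKU-4\nSKU-5\n")
	for batch := range microBatch(fromLines(file), 2, time.Minute) {
		fmt.Println(batch)
	}
}
```

The five records come out as batches of 2, 2, and 1. Swapping `fromLines` for a goroutine that reads from RabbitMQ, Kafka, or SQS leaves everything downstream unchanged.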

The Magento Side of This

Magento’s role in a high-volume import architecture is narrower than most teams expect.

What Magento provides:

- REST API endpoints for product creation and updates (`/rest/V1/products` for single products, `/rest/V1/products` with bulk operations for batches)

- Async bulk API (`/rest/async/bulk/V1/products`) for fire-and-forget batch operations with status polling

- Import/Export module for admin-triggered CSV imports: useful for one-off operations, not for automated pipelines

What Magento should not do:

- Parse incoming files from PIM or ERP systems

- Manage retry logic, concurrency, or batch orchestration

- Track import progress or provide resumability

- Handle data transformation from external formats

The pattern: your Go import service calls Magento’s API. Magento receives clean, validated, correctly formatted payloads. Magento does what it does well — persisting catalog data, triggering indexers, invalidating caches. Everything else happens outside.

The go-m2rest library handles the Magento REST API calls — typed product structs, auth management, auto-retry on 500/503 responses. Layer your batch dispatch on top of it, and the Magento integration piece is solved.
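To show what a worker ultimately sends, here is a sketch that builds an async bulk request using only the Go standard library. It does not use or reflect the go-m2rest API. The `Product` fields are a minimal illustrative subset, the host and token are placeholders, and the `{"product": {...}}` wrapper follows the payload shape in Magento's bulk endpoint documentation as I understand it, so verify it against your Magento version:

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"net/http"
)

// Product holds a minimal, illustrative subset of Magento product fields.
type Product struct {
	SKU            string  `json:"sku"`
	Name           string  `json:"name"`
	Price          float64 `json:"price"`
	AttributeSetID int     `json:"attribute_set_id"`
}

// bulkItem wraps each product as {"product": {...}} (assumed payload shape).
type bulkItem struct {
	Product Product `json:"product"`
}

// newBulkRequest builds a POST to the async bulk products endpoint.
func newBulkRequest(baseURL, token string, products []Product) (*http.Request, error) {
	items := make([]bulkItem, len(products))
	for i, p := range products {
		items[i] = bulkItem{Product: p}
	}
	body, err := json.Marshal(items)
	if err != nil {
		return nil, err
	}
	url := baseURL + "/rest/async/bulk/V1/products"
	req, err := http.NewRequest(http.MethodPost, url, bytes.NewReader(body))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Authorization", "Bearer "+token)
	req.Header.Set("Content-Type", "application/json")
	return req, nil
}

func main() {
	// Placeholder host, token, and attribute set ID.
	req, err := newBulkRequest("https://magento.example.com", "TOKEN", []Product{
		{SKU: "SKU-1", Name: "Mug", Price: 9.5, AttributeSetID: 4},
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(req.Method, req.URL.Path) // prints "POST /rest/async/bulk/V1/products"
	// Sending (http.DefaultClient.Do) and status polling are left out of this sketch.
}
```

Keeping request construction separate from sending makes the batch payload easy to test without a running Magento instance.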

Decision Framework

Build a Go import pipeline when:

- Your catalog exceeds 50,000 SKUs and import time matters

- You need daily or intra-day updates from PIM, ERP, or external feeds

- Your current import regularly fails and restarts from scratch

- You need concurrent writes that a single PHP process can’t provide

- Your team needs visibility into import progress and failure rates

Keep using Magento’s native import when:

- Catalog is small (under 10,000 SKUs) and updates are infrequent

- Imports are admin-triggered, not automated

- The team doesn’t have Go expertise and the import time is acceptable

- You’re in early project stages and simplicity outweighs performance

Batch size starting points:

- Magento REST API: 100-200 records per batch

- Magento Async Bulk API: 500-1,000 records per batch

- Direct database: 500-1,000 records per batch (with proper transaction management)

Leadership Angle

Import performance is often dismissed as an ops concern. It isn’t.

When your catalog update takes 4 hours, your merchandising team can’t react to market changes same-day. When your pricing sync is a nightly batch, you’re running tomorrow’s promotions on yesterday’s prices until the cron finishes. When a failed import means a full rerun, your engineering team spends mornings babysitting data pipelines instead of building features.

The cost model is straightforward. A Go import service running on a single container processes 200,000 products in 20-30 minutes with a 10-worker pool. The infrastructure cost is negligible. The engineering cost is a week of focused work to build the pipeline and the batch management layer.

Compare that to the cost of your current process: hours of blocked merchandising operations, manual monitoring of import jobs, repeated reruns after failures, and the invisible cost of a team that accepts “imports are just slow” as normal.

Fast, reliable imports are a competitive advantage. Not because they’re technically impressive, but because they make your business operations faster.

Conclusion

High-volume imports don’t need exotic infrastructure. They need a pipeline design that treats each stage — ingest, transform, batch, write, report — as an independent concern with its own failure handling.

Go gives you the concurrency primitives to parallelize the write stage. Idempotent batch design gives you resumability. Backpressure gives you safety under load. Together, they turn a fragile, hours-long cron job into a reliable pipeline that finishes before your first coffee.

Stop accepting slow imports as the cost of doing business with Magento. The platform can handle the throughput. Your pipeline just needs to be designed to deliver it.